The U.S. Government Banned an AI Company. That's Your Family's Civics Class in the Age of AI

DISCOVERING AI: Igniting Human Potential

By Amy D. Love, Founder of DISCOVERING AI and of the Global FAMILY AI GAME PLAN initiative

More than 500,000 people have already read the essay "Something Big Is Happening. Rethink What You're Telling Your Kids." This week's standoff just made it more urgent.

Last week, the U.S. government ordered every federal agency to immediately stop using technology from Anthropic, one of the world's leading AI companies. The Department of Defense went further, labeling Anthropic a national security supply chain risk. That is a designation normally reserved for foreign adversaries like China and Russia.

What did Anthropic do to earn that? It refused to let the government use its AI for mass domestic surveillance of Americans or for fully autonomous weapons.

Hours after Anthropic was shut out, rival company OpenAI announced it had struck a deal with the Pentagon. OpenAI's CEO Sam Altman said his company had the same red lines as Anthropic on surveillance and autonomous weapons. The difference was in how each company chose to fight for them.

One company drew a hard line and lost the contract. Another negotiated a deal and kept its seat at the table. Both said they stood for the same values.

This is the kind of story that gets covered as a business headline or a political fight. It is actually something else entirely.

It is a civics lesson. And most kids have no idea it is happening.

What Civics Actually Looks Like Now

Think about what this story is really asking.

Who gets to set the ethical limits for powerful technology? Should a private company be able to write its own moral red lines into a government contract? Or should elected officials and military leaders make those calls?

There is no clean answer. Reasonable people disagree. That is exactly what makes it civics.

Kids growing up today will eventually vote, work, and lead in a world where these questions come up constantly. They need to understand what is at stake. That is why this moment matters for your family.

Law Is Not the Same as Judgment

Parents teach this lesson every single day, often without realizing it.

Just because you can do something does not mean you should.

Artificial intelligence now tests that principle at massive scale. A tool might allow something. The law might permit it. The technology might make it easy.

Judgment determines whether it is actually a good idea.

That distinction will define what leadership looks like in the Age of AI. Children need to learn it early.

The First Generation That Cannot Automatically Trust What It Sees

Here is something worth sitting with.

This is the first generation in human history that cannot automatically trust what it sees or hears.

Images can be created from nothing. Voices can be cloned. Videos can be faked. Kids must now ask a question that previous generations never had to ask:

Is this persuasive, or is this real?

Most students have never been taught how AI actually works. These systems are not thinking. They are prediction engines. They generate the most statistically likely response based on patterns in their training data. They can produce impressive answers. They can also produce confident mistakes.

Understanding that difference is not a bonus skill. It is the foundation of modern digital literacy.

Adoption Is Already Happening

When I speak to students across the country, I ask a simple question: Who has used ChatGPT?

Almost every hand goes up. Every time.

Inner-city students in Birmingham. Private school students in Silicon Valley. Catholic high school students in the Midwest. The response is the same everywhere.

AI is already woven into how kids learn and work every day. The habits are forming right now.

The only question is whether we are shaping those habits intentionally.

The Social Media Lesson

Many families cannot pinpoint the exact moment when social media rewired their teenager's life.

There was no announcement. No national conversation beforehand. Platforms simply appeared in kids' hands, and over time they reshaped attention spans, identity, and social comparison before anyone figured out the guardrails.

History delivered a clear verdict: adoption happened before alignment.

Artificial intelligence gives us a new chance to choose differently.

The Question Parents Must Start Asking

For decades, education relied on a simple signal. Homework produced artifacts. Essays, worksheets, and projects showed evidence that learning had happened.

AI has scrambled that signal.

An essay can be generated in seconds. A math problem can be solved step by step on demand. A finished assignment no longer proves that understanding happened.

The parental question is changing. It is no longer:

Did you finish your homework?

It is now:

How did you do your homework?

Did you wrestle with the ideas? Did you push back on what the AI told you? Did you use the tool to actually understand something better, or did you just copy the answer?

The shift is from completion to cognition.

Alignment Across Parents, Teachers, and Students

Technology will keep moving fast. Schools are adapting as quickly as they can. Families cannot wait for perfect policy before starting conversations at home.

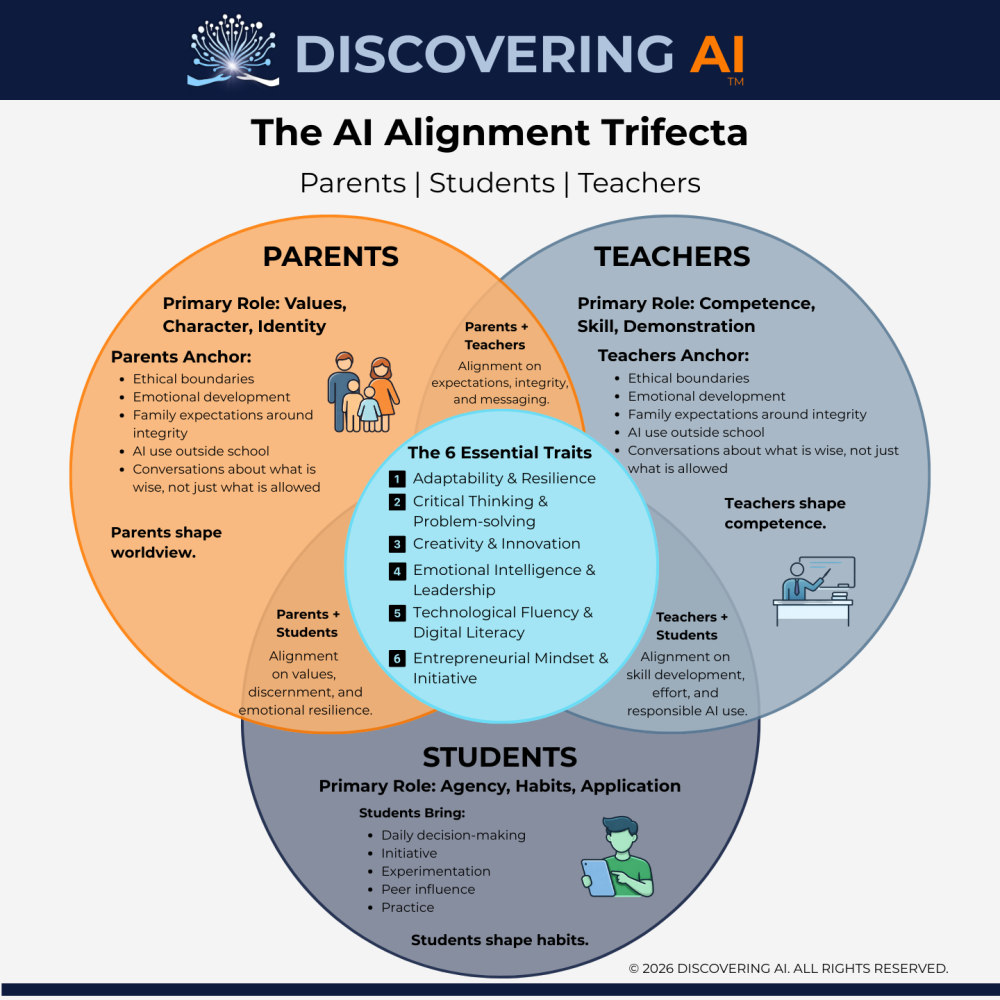

Children operate in three overlapping environments. Parents shape values. Teachers shape competence. Students shape their own daily habits.

When those three groups send different signals, confusion follows.

A teacher bans AI use.

A parent quietly allows it.

A student learns to work around both.

Integrity erodes. Alignment changes that. Shared expectations create clarity.

Here is why that alignment matters.

Over the past year, I have heard versions of the same story from parents across middle school, high school, and college. The details change. The emotion does not.

A student submits honest work. A teacher suspects AI use. A warning is issued to an entire class. Grades may change retroactively. Conversations escalate quickly. That night, the student is no longer asking whether they learned something. They are asking how to prove they did not cheat.

That shift matters.

When conversations about AI and learning start after a problem, everyone is on defense. Students feel accused. Parents feel unprepared. Teachers feel overwhelmed. Schools feel exposed. Fear fills the gap where clarity should have been.

This is not how academic integrity is upheld. It is how trust erodes.

At the same time, there is a truth we cannot avoid. Some students are using AI to shortcut learning. Educators are right to protect the integrity of their classrooms. Learning still matters. Effort still matters. Growth still matters.

What is breaking down is not values.

What is breaking down is language.

For generations, we asked one simple question: Did you get your homework done?

That question assumed effort was visible. It assumed process could be inferred from product. In the Age of AI, those assumptions no longer hold.

Answers are easy now. Process is not.

That is why the most important question has quietly changed.

Not, “Did you get your homework done?” The question that actually matters now is, “How did you get your homework done?”

That single shift changes everything.

When we ask “how,” students are invited to explain their thinking. Teachers gain insight into learning, not just outcomes. Parents can guide values instead of policing tools. Conversations move from accusation to understanding.

When we ask only “did,” we invite shortcuts and suspicion.

Over the past year, I have watched students quietly take 10% grade penalties out of fear, not guilt. I have watched families struggle to advocate because they do not understand the tools well enough to explain what their child actually did. I have watched teachers try to enforce rules that were never designed for this moment.

I have also heard something else, increasingly often. Parents saying, quietly and honestly, “I did not realize how serious this was until it happened to us.”

That is a very human response. AI feels abstract until academic integrity becomes personal. By the time it does, the conversation often starts in fear instead of clarity.

Fear is a terrible learning environment.

Schools are now navigating a reality where unclear expectations create anxiety for students and legal exposure for institutions. Parents are realizing they can no longer outsource engagement. Teachers are being asked to evaluate work created in ways they were never trained to assess.

Trying to solve this with detectors, retroactive accusations, or silence will not work.

What does work is alignment.

Clear expectations, shared language, and early conversations between home and school. Transparency about what tools are allowed, how they can be used, and what learning actually looks like now. Space for students to explain their process. Guidance that treats AI as a learning support to be used intentionally, not a shortcut to be hidden.

This is why, in the Age of AI, parenting is no longer passive. It is participatory.

Parents do not need to be AI experts. They do need to be engaged. They need to ask better questions. They need to help their children understand the difference between using a tool to think and using a tool to replace thinking.

Educators should not be expected to navigate this alone. Academic integrity is strengthened through clarity and partnership, not fear.

As we begin a new year and students return to classrooms, syllabi, and assignments, I invite you to listen for the questions being asked.

If the only question is whether homework was done, we will continue to see confusion, anxiety, and mistrust.

If we learn to ask how learning happens, we create space for honesty, growth, and integrity.

The future of learning will not be defined by the tools students use. It will be defined by how intentionally we guide them.